Piloting a constructive feedback model for problem-based learning in medical education

Abstract

Purpose

Constructive feedback is key to successful teaching and learning. The unique characteristics of problem-based learning (PBL) tutorials require a unique feedback intervention. Based on the review of existing literature, we developed a feedback model for PBL tutorials, as an extension of the feedback facilitator guide of Mubuuke and his colleagues. This study aimed to examine the perceptions of students and tutors on the feedback model that was piloted in PBL tutorials.

Methods

This study employed a qualitative research design. The model was tested in nine online PBL sessions, selected using the maximum variation sampling strategy based on tutors’ characteristics. All sessions were observed by the researcher. Afterwards, tutors and students in the PBL sessions were interviewed to explore their perceptions of the model.

Results

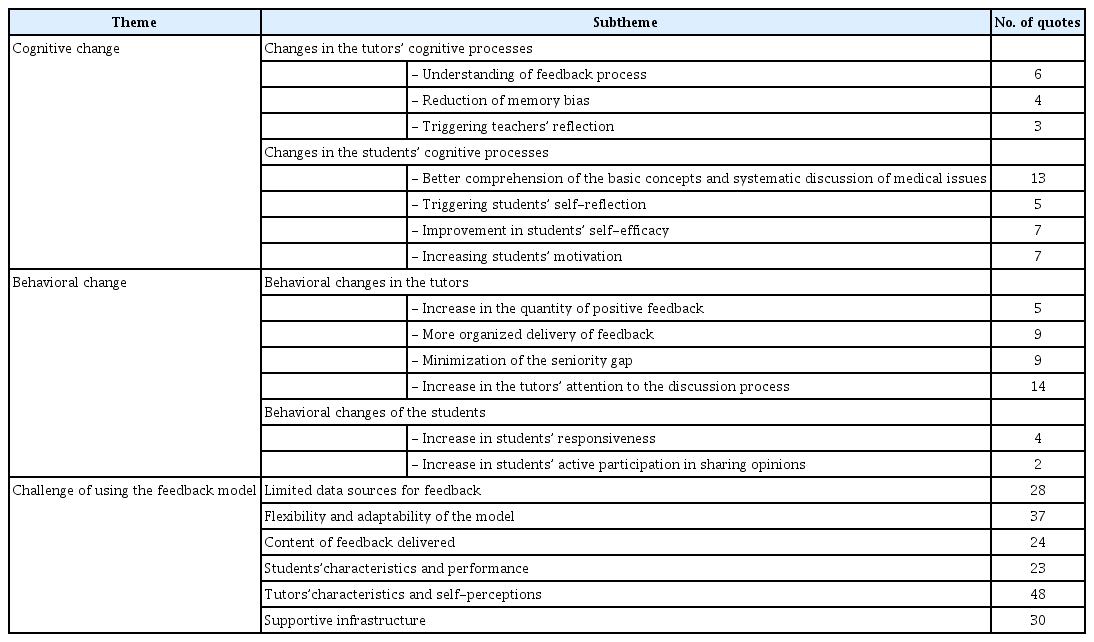

Three themes were identified based on the perceptions of the tutors and students: cognitive changes, behavioral changes, and challenges of the use of the feedback model. Both tutors and students benefited from improved cognition and behavior. However, the use of the feedback model still encountered some challenges, such as limited sources of feedback data, flexibility and adaptability of the model, content of feedback delivered, students’ characteristics and performance, tutors’ characteristics and self-perceptions, and supportive infrastructure.

Conclusion

The model can be used as a reference for tutors to deliver constructive feedback during PBL tutorials. The challenges identified in using the constructive feedback model include the need for synchronized guidelines, ample time to adapt to the model, and skills training for tutors.

Introduction

Constructive feedback plays an essential role in the teaching and learning process because it is a catalyst for students’ learning and performance. Feedback highlights and provides input on students’ improvements. Furthermore, feedback can increase students’ performance by increasing their awareness of their progress toward meeting the expected competency. Students are expected to use this information to perform self-reflection before deciding what to improve. Thus, feedback can increase students’ self-evaluation [1,2].

Multiple studies have suggested techniques for ensuring that both teachers and students benefit from feedback. These include improving the technique used for giving feedback, the teachers’ behavior, and the content of the feedback. Moreover, students can also improve how they receive feedback and the type of feedback they request as well as create a habit of seeking feedback [3,4]. Despite such efforts, students may still show resistance in responding to feedback. Such resistance may result when students focus on the technique and content of the feedback without trying to fully understand the perceptions of their teachers and peers [5,6].

In the pre-clinical phase of study, feedback can be provided in various learning activities, including problem-based learning (PBL) tutorials. The PBL tutorial is known as a learning method that can significantly increase students’ desire to get feedback [7]. PBL was initially developed by McMaster University, and nowadays it is widely used in medical schools around the world [8]. Discussions can be conducted either face-to-face or synchronously through an online platform. Both face-to-face and online discussions are capable of meeting the basic PBL principles: constructive, independent, collaborative, and contextual [9]. A PBL session is usually facilitated by a teacher as the tutor. It is usually conducted in a small group (8–10 students) and follows certain steps in order for students to learn based on a problem in a trigger scenario [10].

One set of steps used to conduct PBL is the 7-jumps. The 7-jumps consists of reading the case and clarifying unclear terms or concepts, defining the problem, analyzing the problem using prior knowledge, ordering ideas and systematically analyzing them in depth, formulating learning objectives, seeking additional information (individual learning), and synthesizing and testing the new information by sharing. Although the PBL steps do not specify how feedback should be delivered, the provision of inaccurate feedback, whether in terms of content, timing, or method of delivery, may obstruct the continuity of a discussion. Challenges in giving feedback also result from the unique characteristics of PBL, namely clear-cut discussion steps, group dynamic processes, and limited discussion time. Therefore, a tutor requires a specific feedback model that provides comprehensive guidance on the allocated time, content, and methods of giving feedback in PBL tutorials.

Currently, there are various methods to deliver verbal feedback, such as the sandwich model, SET-GO, Pendleton, ALOBA, and R2C2 [11-13]. With regard to PBL, a specific guideline for providing feedback in the discussion has been developed by Mubuuke et al. [14] based on the exploration of students’ experiences during PBL tutorials. The facilitator feedback guide consists of five domains in the PBL to which feedback can be directed, i.e., problem conceptualization and knowledge construction process, participation and teamwork, communication and interpersonal skills, time management and leadership, and self and peer evaluation. However, a study on feedback within PBL by Darungan et al. [15] found that feedback had not been given frequently during PBL tutorials and that students preferred feedback that was balanced between positive and negative comments, focused on suggestions and improvements, and delivered through an interactive process without any superiority between tutors and students. Therefore, given that the facilitator feedback guide of Mubuuke et al. [14] contained only the feedback domain/content, it is necessary to add the method of feedback delivery to the feedback guide.

The proposed feedback model in the current study is an extension of the feedback guide from Mubuuke et al. [14] based on the analysis of existing literature regarding feedback provision (Table 1). The feedback model comprises three main sections: opening discussion, main discussion, and closing discussion, in which the content and process of feedback delivery are explained at each section of the model. One of the approaches that is considered appropriate to deliver feedback is the educational alliance concept [16,17]. The principles embodied in the educational alliance are active participation from students and teachers’ genuine interest in students’ development. These principles are applied in the proposed feedback model by establishing an opening discussion section in which trust and close relationships between teachers and students are built and similar perceptions regarding feedback are obtained. Other literature such as Lipnevich and Smith [18] and Henderson et al. [19] highlighted the culture of feedback in higher and medical education in which more appreciation of students and facilitative dialogue are important. These are translated into the main discussion section of the feedback model, where facilitative dialogue and appreciation are provided and emphasized throughout the PBL steps, and further strengthened by a closing discussion section where appreciation is given to maintain students’ trust. Furthermore, the written feedback model from Zelenski et al. [20] emphasizes the importance of closing the loop, where students’ achievement is discussed, reflected on, and compared with the standards of performance, and action plans to achieve the intended targets are formulated. Thus, in each step of the feedback model, tutors need to focus on making clear what students have achieved, the intended targets, and how to get there. Lastly, the findings from the study of Fitri et al. [21], which identified critical events during PBL discussion such as inactive/dominant or unprepared students, are utilized to incorporate the feedback domain of Mubuuke et al. [14] into each step of PBL discussion to ensure appropriate timing for giving feedback and minimize those critical events.

Since the facilitator feedback guide developed by Mubuuke et al. [14] focuses only on the domain of feedback, and the study of Darungan et al. [15] demonstrates the need to also consider the principles and methods of providing constructive feedback, the current study is aimed at piloting a constructive feedback model appropriate for PBL tutorials. We argue that a feedback model specifically designed for PBL, which contains not only the feedback domain but also detailed explanations of the method of feedback delivery, is necessary to assist tutors in facilitating PBL tutorials more effectively and providing constructive feedback. It is expected that the proposed feedback model also functions to reduce gaps in the perceptions and expectations of feedback between tutors and students.

Methods

This study employed a qualitative research design to examine the perceptions of students and tutors on the feedback model. The feedback model used in this study was developed from the feedback model of Mubuuke et al. [14] with additional sections on feedback delivery methods, based on the analysis of existing literature. The model was translated into a guideline to be distributed to the study participants during the pilot phase.

1. Piloting the model

The pilot test of the model was conducted during online PBL sessions of an undergraduate program in the Faculty of Medicine, Universitas Brawijaya, in Indonesia from May to June 2020. No intervention was made to the PBL case scenario, learning issues, or the technicalities of the PBL steps or procedures. However, tutors involved in the discussion were given the guideline to deliver feedback based on the model. The PBL sessions, which employed the 7-jumps method, were conducted twice a week to discuss one trigger scenario. The time allocated for each discussion was 120 minutes. The discussion involved 10–12 students and was facilitated by one tutor. The maximum variation sampling method was used to select the PBL tutorials in which the model would be tested. The tutor participants varied based on gender, years of teaching experience, and academic qualifications (Table 2). Nine tutors were involved in this pilot test, three from each semester, focused on three topics: pharmacology in semester two, coronavirus disease 2019 in semester four, and cardiology in semester six. The student participants were chosen from the groups facilitated by the selected tutors.

2. Data collection

Both tutor and student participants were invited to interview sessions to explore their perceptions of the feedback model. Nine focus groups with student participants and nine in-depth interviews with tutors were conducted using an interview guide developed based on the literature review. All interview sessions were audio recorded and moderated by the authors, lasting between 30 and 120 minutes. For data triangulation, the PBL sessions were video-recorded and observed by a member of the research team, who observed how the sessions progressed, took notes on the important features of the session, and specifically identified compliance with the model.

3. Data analysis

The interviews and focus groups were transcribed verbatim, and a thematic analysis method was employed. The first author read and reread the transcripts to become familiar with the data and identified potential themes. Initial codes were given to the identified themes and were then reviewed and discussed with all authors. Detailed analysis of each theme was then conducted, and any newly emerged themes/subthemes were added [22].

4. Ethics statement

The study was granted ethical clearance by Universitas Indonesia (approval no., KET-100/UN2.FI/ETIK/PPM.00.02/2020) and Universitas Brawijaya (approval no., 23/EC/KEPK/01/2020). The researchers obtained the participants’ consent prior to data collection and guaranteed data confidentiality.

Results

Based on the thematic analysis of the interview and focus group transcripts and observation findings, three main themes were identified: cognitive changes and behavioral changes resulting from the use of the feedback model, and the challenges of using the feedback model. The list of themes and subthemes is provided in Table 3.

1. Cognitive changes

Many participants reported that the implementation of the model impacted their cognitive processes. Cognitive changes were reported in tutors as well as students. Tutors explained how the model assisted them in understanding where and when to give feedback, as well as the content of the feedback that should be delivered. In addition, tutors reported that the model reduced their memory bias and triggered them to think and reflect on their roles and responsibilities as facilitators in the PBL.

“…once they finished brainstorming [one of the steps in PBL]…[I know] that it is the right time for feedback, because previously the feedback was [given] at the end [of PBL session], it would eventually pile up and be forgotten.” (W9)

“The benefit [for using model] is that we become aware of our role towards students as facilitators.” (W2)

Based on the feedback delivered, the students expressed better comprehension of the materials being discussed. Student participants also mentioned that feedback received from tutors stimulated their self-reflection, and their self-efficacy and motivation to learn were also increased.

“[After getting feedback on PBL session] I tried to find it [the answer to the learning objectives] again afterwards because it turned out that my answer was not specific.” (F1)

“What the tutor mentioned at the beginning of the PBL made us focused in following the discussion in PBL, and we also gained knowledge and benefits from this discussion, so we understood the material discussed.” (F4)

2. Behavioral changes

Behavioral changes also occurred in tutors and students. Tutor participants highlighted that the model enabled them to pay more attention to the tutorial process. The feedback model also provided a more systematic and organized way to deliver feedback and encouraged tutors to give more positive feedback. The seniority gap between tutors and students was also narrowed since tutors were encouraged to provide constructive feedback. Tutors also reported that students were more active and responsive in the discussions. Some of the comments illustrating the behavioral changes are provided below.

“We’re [tutors] encouraged by ‘oh, feedback must be given soon in every step’ so we must focus our attention on them [students].” (W6)

“There are many students who responded, fewer students are passive, and then students that are usually passive or quiet become more active to speak up.” (W3)

3. Challenges of using the feedback model

Aside from the benefits of the feedback model in promoting cognitive and behavioral changes, we also observed challenges related to its implementation. The implementation of the model was challenged by the limited sources of data for giving feedback. As the discussion sessions were held online due to the current pandemic, some tutors described difficulties in identifying students’ involvement in the discussion due to a lack of students’ online presence. Observation results showed that some students were allowed to be off-camera during discussions, while some on-camera students showed a lag, freeze, or bad angle picture.

“Conditioning the students to really focus on the discussion is hard. It [should] become their personal responsibility, because we do not know what the students are doing, especially with [their] cameras off…” (W6)

The use of the feedback model needed to take into account the time constraints of PBL sessions. Some participants also reported difficulty in applying the model because too many interventions by tutors caused the discussion to be too formal and awkward. Thus, the flexibility and adaptability of the feedback model were observed as a challenge.

“Discussion time seemed prolonged, [it was] longer than usual. Since we are waiting for each other’s responses and following the steps so they [students] can speak up clearly, [thus] it limited the interaction.” (W4)

“In my opinion, it [giving feedback based on the model] is hard. I tend to push myself to intervene in each step when perhaps they do not need it. When the discussion has gone well, should we still give feedback, although it is positive feedback? Sometimes I find it uncomfortable to make many interventions.” (W6)

Another challenge was the influence of content expertise towards the feedback content. Even after the tutors were provided with guidance about the discussion materials, some highlighted their clinical experiences as a consideration when giving specific feedback.

“If I was tutoring a topic that is my area of expertise, I can give them [students] more. After discussion I can share my clinical experiences and many others. But if the topic is not my area of expertise, I cannot share much.” (W5)

Students’ characteristics and performance in the discussion were also the challenges identified when using the model. Almost all tutors considered students’ characteristics and performance, as well as the group dynamics during discussions, before deciding to give feedback at the scheduled time.

“When there are fewer problems, when the leader leads the discussion smoothly, it doesn’t make sense if I still give feedback, for example, “the leader seems passive”. And each leader has his/her own style, some give a conclusion in every step while some do not. That [different style] is not essential, so I do not give any feedback.” (W1)

During piloting of the model, some tutors reported some hesitation at first to use the model because it did not match their image. But once the tutors overcame their doubts, the model implementation resulted in a good response in the tutorials. The observation findings also showed that some tutors did not complete the step of building trust or did it awkwardly and rigidly.

“It burdens me because I am not used to being concerned with students’ business, but the burden is just in the first [meeting] and in the opening discussion. It’s my fault because I’m a person who is always thinking about my reputation.” (W2)

“Usually, I am worried that if I cut off their discussion, it might ruin the discussion atmosphere. But after I piloted the model yesterday, evidently, the atmosphere was not ruined. That means it can be done, but I had never tried it before.” (W1)

Supportive infrastructure was reported as an important factor in the implementation of the constructive feedback model, for example, the quality of the case scenario. Some participants also expected the model to be provided in a checklist-like format.

“If the case is well-developed, feedback will be easier to deliver.” (W1)

“If we tried it many times, we would just need a checklist, because we already memorized it [the feedback model’s steps]…” (W6)

Discussion

The constructive feedback model for PBL tutorials was developed based on literature and content review leading to the pilot test. In line with the study by Sargeant et al. [13], in which the authors utilized three main concepts to develop the R2C2 model, the current study also referred to previous studies and literature to formulate the model. This study involved relatively young teachers ranging from 29 to 40 years old. A small age gap leads to a sense of security for students in making mistakes. On the other hand, cognitive chemistry can make feedback delivery more relevant and specific to the students’ academic level and can be delivered in a simple language that is easy to understand [23].

1. Cognitive and behavioral changes

Our findings show that the feedback model has led to cognitive changes in both tutors and students. Using this model, tutors have information on what feedback to give and when to give it. The model is structured based on the stages of the PBL; therefore, it indicates the timing of feedback and which specific aspects should be the focus of feedback. We argue that such a model benefits tutors in terms of cognitive changes. One of the problems with feedback within PBL is that feedback often differs between groups, and students then compare it [14]. Thus, we believe that the model closes the cognitive gaps between tutors since they know when and what to give feedback on. The model also reduced memory bias, since without it tutors must rely on their self-assessment and judgement on when to give feedback, and this judgement may not always be accurate [24]. Overall, the model has enabled tutors to be more aware of their roles in PBL and in giving feedback throughout the discussion. The feedback provided by the tutors based on the model has also led to cognitive changes in students. Feedback provided within PBL is aimed at ensuring students’ attainment of the learning objectives through mastering the content being discussed [14]. The model allows the delivery of specific, targeted, and timely feedback, which helps students to identify their knowledge deficits and close the gap. The feedback should be generated by observing students’ performance and ensuring that it matches the students’ competency levels [25]. The study also showed that using a constructive feedback model that provides students with external information on their mistakes encourages students to self-reflect. As stated by Hattie and Timperley [26] and Kluger and DeNisi [27], the constructiveness of feedback is shown by improved self-efficacy, which is followed by more effective self-regulation, so that students increase their commitment and effort in improving their performance.

The study findings also confirmed that behavioral changes occurred in tutors and students. The behavioral changes are demonstrated by the actions and participation of tutors and students throughout the discussion. The study demonstrates that when using the model, tutors tend to give more regular positive feedback, pay more attention to their students, and minimize the seniority gap. Meanwhile, students become more responsive and active in giving their opinions. Such behaviors can lead to a more positive learning environment, which reduces students’ negative reactions to feedback [4]. A similar finding can be found in a study by Sara et al. [28], where feedback improved students’ motivation and performance.

Based on the educational alliance concept, the development of trust among parties is one of the most important keys to achieving constructive feedback [17]. For the current model to work, it required the development of trust throughout the sessions, from opening to closing. The current model has contributed to the formation of an educational alliance between tutors and students, as indicated by the findings that students were more comfortable giving their opinions and improved their performance in the second discussion of PBL based on the feedback given.

2. Challenges of the use of the feedback model

Despite the benefits in terms of cognitive and behavioral changes, the use of the model still poses some challenges that should be taken into consideration when applying this model further or adapting it. The data sources for feedback and the model’s flexibility and adaptability are among the most critical factors to consider when using the model. A data source is any information that tutors need to observe and obtain to be able to analyze and determine what feedback needs to be given and when. Although the model includes information on the best timing and content of feedback delivery, the difficulty of observing students’ performance online might lead to the reluctance of tutors in the current study to provide feedback and ascertain whether or not facilitative dialogue will occur. The literature shows that students’ performance during an online lesson was less optimal than during a face-to-face lesson [29], as in our study, where students often turned off their cameras while tutors were sometimes hesitant to intervene. A similar result was found in a study by Ng et al. [30], which showed less tutor intervention during an online discussion in PBL tutorials. Thus, extra effort is required to obtain data sources and adjust the feedback delivery mode, for example, by checking students’ presence in the online discussion.

During the pilot, tutors had to be familiar not only with the feedback delivery model but also with the online discussion characteristics, and it can take more than three to four tutorials to become accustomed to the model. Difficulties in transitioning from conventional to online learning methods were also found in a study conducted by De Jong et al. [31], where tutors suffered from fatigue, since they had to adjust to online discussions’ technicalities during the tutorials. In the current study, one of the technical problems was the need to take turns in giving opinions. The limited non-verbal response and the need to take turns giving opinions created inflexible behavior and discussion, which led to the necessity of extra time for brainstorming in online PBL discussions [9]. In the future, the feedback delivery model should be accompanied by training and guidelines to assist tutors in delivering feedback in different settings, including online.

The feedback model provided information on time allocation, content, and delivery methods. However, according to the respondents, feedback content can vary according to their own expertise, not the discussion materials. Therefore, a mastery of discussion materials, aside from their own expertise and clinical experience, is needed to improve tutors’ self-confidence when delivering feedback, especially negative feedback [32]. To minimize differences among tutors, faculty should provide tutors with more detailed case guidelines and discussion materials. The current study also showed that students’ characteristics and performance influence the use of the model. Students have a greater sense of ownership toward the feedback given to them when the tutors understand their characteristics [32]. Thus, a tutor needs to have information on students’ background, performance and achievements to be able to give specific feedback [33]. Such information is difficult to obtain in a learning process involving a large number of students, limited time, and an online delivery mode, similar to the tested model.

Our study demonstrates that tutors’ characteristics and self-perceptions, which also depend on their experiences and cultural background, influenced their use of the feedback delivery model. Some tutors in this study may want to maintain a strong hierarchical relationship in order to preserve their image and reputation as teachers, and this may inhibit the constructive feedback delivery process. Thus, it is important for tutors to understand the intention of feedback and align it with students’ goals [5]. The influence of tutors’ characteristics and self-perceptions can be minimized by providing appropriate training to facilitate PBL discussions and give constructive feedback [34].

The current study demonstrates that the availability of supportive infrastructure poses a challenge for the effective delivery of feedback during PBL tutorials. The sudden transition from face-to-face to online classes resulted in inadequate standard operating procedures for online discussion and poor synchronization between the case scenario and tutor guides. The quality of the scenario is also important since it can cause critical incidents in PBL, such as unequal participation in discussion or superficial discussion [21], which may limit the quality and quantity of feedback that can be given. Thus, tutors’ interventions during each of the PBL steps are needed to overcome this [35]. The current study also shows that examples and detailed explanations in the feedback model succeeded in providing a better understanding for tutors, but a simple checklist is worth considering, especially if the tutors are already well trained and accustomed to the model.

3. Future use of the feedback delivery model in PBL tutorials

The study revealed that the feedback delivery model can serve as a reference for providing constructive feedback during PBL tutorials. Tutors can use this model and combine it with case guidelines to deliver case-specific feedback during discussions. The model contributes to the cognitive and behavioral changes of both tutors and students by creating active discussion and a positive atmosphere. However, further support is necessary, especially for tutors. Tutors should be familiar with the feedback delivery models, undergo adequate training, and have sufficient time to adapt to the changes. If PBL tutorials are to be conducted online, faculty should provide clear guidelines to help tutors observe and assess students’ activities during discussions. Some improvements to the model are also necessary, such as transforming the model into a handier checklist, and matching the feedback content with the case discussion. Future studies could examine the use of the current model in face-to-face discussion and identify whether there are any similarities or differences in terms of the model’s benefits and challenges compared to those of online discussion.

We acknowledge several limitations of the current study. Firstly, the search for the literature that underlies the development of the model was not intended to be exhaustive, which may limit the sources of information for model development. However, the results of the search process are considered to be informative for the model development, as they contributed to several components of the model. Secondly, as the piloting of a constructive feedback delivery model in PBL tutorials was performed in one of Indonesia’s medical institutions through qualitative data collection, the study results only depict the conditions at the time and place the study was conducted. However, this study has succeeded in developing and testing a constructive feedback model in PBL tutorials in which steps are conducted based on the common principles of PBL. Therefore, it is very likely that the feedback model developed by this study would be applicable to PBL tutorials in other institutions.

4. Conclusion

The current study piloted a constructive feedback model for PBL tutorials, which was developed through a process of literature and content review. The feedback model was found to facilitate cognitive and behavioral changes in both students and tutors, during and after PBL tutorials. Various challenges in the use of the model have also been identified, including the existence of supportive infrastructure, tutors’ expertise, and students’ characteristics and performance. Adapting the feedback model for use in medical schools’ PBL tutorials requires thorough consideration of all identified challenges, and further studies to examine the adaptation of the model would be worthwhile. It is also worth exploring whether the model would be feasible in face-to-face PBL and yield similar or different benefits and challenges.

Acknowledgements

The authors would like to thank all participants involved in this study.

Notes

Funding

The study was funded by Universitas Indonesia Grants for International Publications (no., NKB-2293/UN2.RST/HKP.05.00/2020).

Conflicts of interest

No potential conflict of interest relevant to this article was reported.

Author contributions

DRP initiated the study. NW and DS together with DRP designed the whole study. DRP led the data collection and analysis. DRP, NW, and DS were involved in the data analysis and interpretation. DRP and DS drafted the manuscript. All authors were involved in the significant revision of the manuscript. All authors approved the final version of the manuscript.